Briefings highlight generational AI scaleups, startups, and projects. I was fortunate to have chatted with co-founder & CEO Brian Raymond. Read on to learn more about his unconventional path from constitutional design expert to AI, how Unstructured came to be, and why it is a generational company.

Unstructured simplifies the process of converting unstructured data into a format usable for AI, specifically focusing on Large Language Models (LLMs). Users simply upload raw files containing natural language to Unstructured's API and receive back clean data, bypassing the need for custom Python scripts, regular expressions, or open-source OCR packages.

Why Unstructured is a generational company:

Traction: Unstructured is already generating several millions of revenue within a year of founding

Large existing market: Extracting data from documents is an existing market and a large problem in search of a much better solution

Seasoned, venture-backed operators: The founders helped scale the NLP startup Primer.ai

Backed by top investors: Madrona and Bain Capital Ventures led their Series A and Seed rounds

An Unconventional path to AI

Brian has one of the most interesting founder backgrounds. Most AI founders today have technical backgrounds, many have PhDs in computer science. Brian was also pursuing a PhD. But instead of studying how computer transistors work, he was studying how countries transition from communism to capitalism. His constitutional expertise got him a fellowship at the CIA, which eventually convinced him to join the agency as an intelligence analyst before completing his degree. A CIA intelligence analyst is responsible for gathering, interpreting, and assessing information related to national security. Each focus on specific subjects like political movements, foreign governments, or emerging technologies. His focus was Iraq. I was curious about what a day-in-the-life looked like for him.

Brian described his routine as: 'I was subscribed to thousands of RSS feeds. Every morning I would come in at 6AM, then read intensively until 8-9AM. I would have hundreds of tabs open. This was what I did. Day in day out.' He maintained this regimen for five years from 2009-14. As ISIS was rising, he was tasked with becoming the Iraq Briefer for the White House and State Department. A Briefer translates the analyst's detailed reports into a digestible format, focusing on key takeaways, implications, and recommendations. Faced with the demanding role of synthesizing multiple analysts' reports, Briefers must be prepared to report to policymakers first thing in the morning. Reflecting on his transition to this role, Brian had mixed feelings. 'I was not thrilled waking up at midnight and going and prepping briefing books for the next six hours. Every morning.'

On his 2nd day as a Briefer, ISIS took over Iraq’s second largest city Mosul. This was a turning point for the international community since it demonstrated that ISIS was a force capable of capturing a large city. The following week, he was asked to serve as Country Director for Iraq at the National Security Council (NSC), which is the US President’s principal forum for national security. Within a week, his role shifted from being an information junkie analyst to policy advisor to the US president.

How is this all relevant to being a startup founder? By age 27, Brian had already honed the skills crucial for a CEO: synthesizing vast amounts of information, managing diverse stakeholders, and making high-stakes, time-sensitive decisions.

From Primer to starting Unstructured

Fast forward to 2018, Brian joined Primer, a startup helping organizations analyze massive amounts of text. There he led the company’s global public sector practice, which at one point made up 75% of the business. As a General Manager, he had to wear many hats from product manager to salesperson to customer success. It was an exciting time according to Brian. Large language models (LLMs) had just started emerging then with the publications of the Transformer (2017) and BERT (2018) papers. And Primer was at the cutting edge of it. With LLMs, Brian was helping intelligence analysts deal with increasing amounts of data. According to Primer, in 1995 an intelligence analyst reads 20,000 words a day. That grew 10x to 200,000 by 2015. In comparison, someone would only be able to go through ~100,000 words if they read at the average speed for 8 hours straight. Knowledge workers face similar information challenges from spending three hours each day searching for information, flipping through 20 browser tabs, and reading five documents to find the one that matters.

The rising amount of information also reflects the diversity of sources and content of files. Ingesting new sources into a machine learning pipeline is a significant engineering effort. Brian faced this challenge constantly while building solutions for Primer’s customers. He looked for a solution for years. First, he canvassed data integration vendors but they were focused on structured data. He then spoke to intelligent document processing companies who dealt with unstructured documents. But each iteration of filetype, layout, language, and medium requires a new data engineering pipeline. The ROI for either group of vendors was low.

Brian left Primer in early 2022 to better understand the problem. Over the next few months, he interviewed almost 100 data scientists and found a common theme: the repetitive use of regex, OCR, and Python scripts for data preprocessing. Data scientists are not rewarded for building preprocessing pipelines but rather for creating models and improving metrics. Convinced of the opportunity in streamlining unstructured data preprocessing—an issue no one seemed keen to solve—he brought on ex-Primer colleagues Crag Wolfe and Matt Robinson to start Unstructured.

What is next for Unstructured

The founders were inspired by the movement they saw in Hugging Face in which they saw thousands of data scientists & developers constantly building and experimenting on the platform. But they know that in spite of having a buffet of models to choose from, data scientists are still hampered by the preprocessing step. Unstructured helps bridge that gap. In addition to what the project already has, a significant investment they are making is in a to-be-released state-of-the-art model called Chipper. It is an OCR-free model that will look at the document, understand the content, and return the content in a nice clean format. Not only will they make Chipper freely available via open-source, they are also optimizing it for CPUs so it can be deployed at scale.

I wondered how are they going to make money — the classic open-source company conundrum. Do they sell services, offer an upgraded proprietary version, or a managed software service. According to Brian, it will be the third. They are going to offer an enterprise-oriented SaaS with user management, nice interface, admin controls, and reliable provisioning. While that does not sound sexy, that is the bedrock of the most successful open source companies today — Databricks, Confluent, Elastic.

Granted that it is convenient to list successful examples with the benefit of hindsight. The cynic would point out that most open-source companies fail to make money. By that measure, Unstructured stands out because it is already making millions in revenue within a year of founding.

The coming months will drive that even higher:

October: Chipper model launch

October: Azure & AWS marketplace listing

November: SaaS offering

If you are a data scientist, check out the project and their free (for now) API service.

Unstructured Links: Website | Github | Blog | Documentation

Product Notes

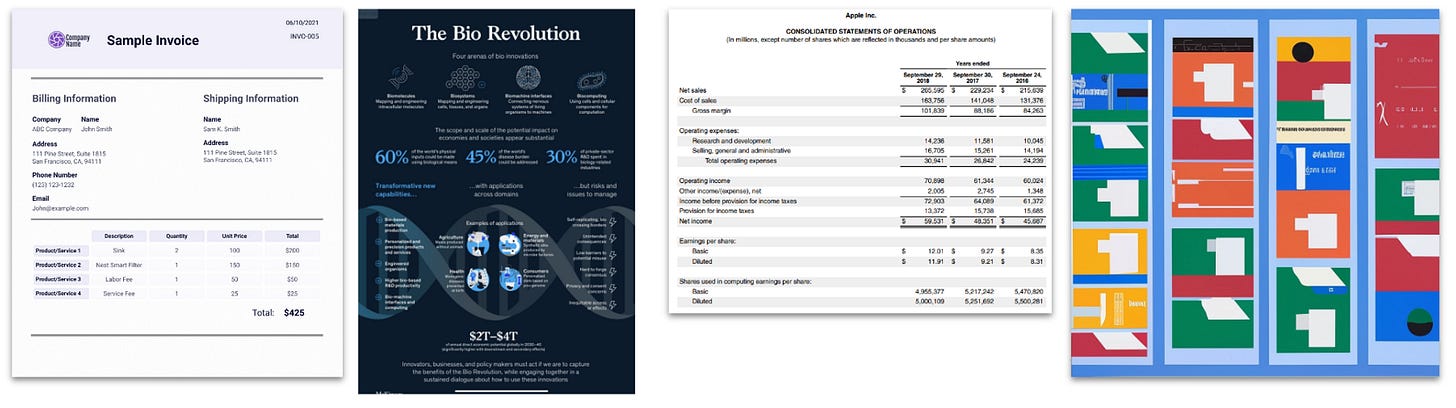

To make generative AI useful for enterprises, models have to incorporate proprietary data whether via finetuning or retrieval augmented generation (RAG). Often, the data is stuck inside documents from reports to presentations to chat logs. Extracting data into a usable format is painful. Let us take digital documents as an example. While most of the world’s documents are standardized into a handful of file formats, there is an infinite variation of formatting, content, and layout inside each one. There is only so much that can be templatized. The image below shows screenshots of documents that are stored as PDFs. But building pipelines to extract data for each would drive data scientists mad, let alone a generalizable pipeline. This is why intelligence document processing companies in the past years can only specialize in one document type or use case (e.g. invoices, NDAs, contracts).

Unstructured helps solve this by offering APIs that can ingest documents regardless of file type, layout, or location and render standardized, clean machine learning-ready data. Data engineers & scientists can build generalizable pipelines for their applications. They break down the process into the following steps:

Ingest: Unstructured can currently ingest over 20 file types. File type determines what kind of data can be extracted. A regular .txt file does not have pages while a .pdf would.

Partitioning: Break down a document down into a primitive set of elements across document types such as title, body text, list-type items, etc.

Cleaning: These elements can then be cleaned for extra white spaces, annoying ASCII characters that break some libraries, and other little annoyances that typically require a bunch of regex rules.

Extraction: Some text or elements that have relevant information such as email addresses, phone numbers, etc. These are important metadata to filter searches.

Staging & chunking: These are “second” mile steps in an application where text is processed further for specific applications. Users can convert the extracted data into a CSV or chunk them into sections. One of the problems with today’s LLM applications is naive chunking, where in a text is split into sections that ignores a document’s information architecture (e.g. section, chapter). Unstructured can intelligently understand the structure to chunk text.

More than just AI applications

Unstructured is valuable even in non-AI use cases. The extracted data can improve information retrieval better by providing additional keywords and metadata to search against. Making an S3 bucket or Google drive full of PDFs searchable is extremely valuable. It is the core value propositions of many vertical startups today.

Chipper model / OCR-free document extraction

Not much yet is known about Chipper today except that is is based on Donut (Document Understanding Transformer) So we go through Donut below instead.

Imagine your typical scanner or a scanning app on your phone. You take a picture of a document—say, an invoice. Traditional systems would first read the text (a process called OCR) and then another piece of software would try to figure out what this text means. Is it a date? An item name? The software needs to piece these together like a puzzle.

Donut does it differently. When you scan the invoice, it does not only read the text; it also 'sees' how the document is laid out. It understands that the bold text at the top is probably a title, the table in the middle lists the items you bought, and the numbers at the bottom are the total cost. It is like glancing at a page in a book and understanding the whole story, not just the words. So, it does not just see words and images; it understands how they relate to each other in the document. The most practically innovative part is that it does all of this without needing the initial text-reading step (OCR). That means fewer chances for errors and a faster understanding of what the document is all about.